K-Means & Other Clustering Algorithms: A Quick Intro with Python

Unsupervised learning via clustering algorithms. Let's work with the Karate Club dataset to perform several types of clustering algorithms.

LearnDataSci is reader-supported. When you purchase through links on our site, earned commissions help support our team of writers, researchers, and designers at no extra cost to you.

You should already know:

- Beginner Python

- Data science packages (pandas, matplotlib, seaborn, sklearn)

These can be learned interactively through DataCamp

You will learn:

- Clustering: K-Means, Agglomerative, Spectral, Affinity Propagation

- How to plot networks

- How to evaluate different clustering techniques

Clustering is the grouping of objects together so that objects belonging in the same group (cluster) are more similar to each other than those in other groups (clusters). In this intro cluster analysis tutorial, we'll check out a few algorithms in Python so you can get a basic understanding of the fundamentals of clustering on a real dataset.

Article Resources

- Source code: Github.

- Dataset: available via

networkxlibrary (see code below), also see paper: An Information Flow Model for Conflict and Fission in Small Groups

The Dataset

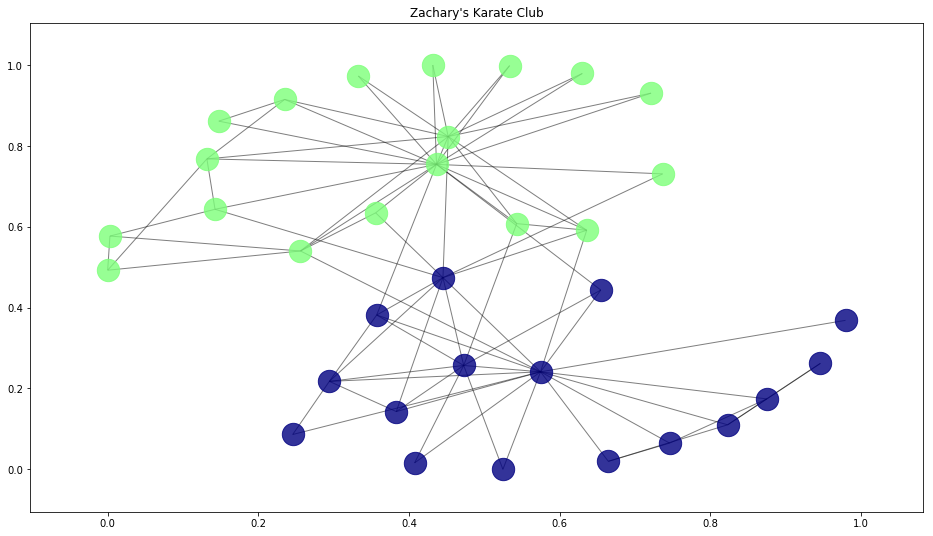

For the clustering problem, we will use the famous Zachary’s Karate Club dataset. For more detailed information on the study see the linked paper.

Essentially there was a karate club that had an administrator “John A” and an instructor “Mr. Hi”, and a conflict arose between them which caused the students to split into two groups; one that followed John and one that followed Mr. Hi.

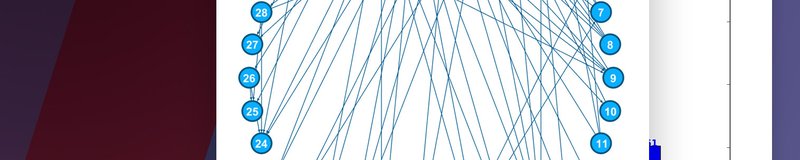

The students are the nodes in our graph, and the edges, or links, between the nodes are the result of social interactions outside of the club between students.

Since there was an eventual split into two groups (clusters) by the end of the karate club dispute, and we know which group each student ended up in, we can use the results as truth values for our clustering to gauge performance between different algorithms.

Getting Started with Clustering in Python

Imports for this tutorial

We'll be using the scikit-learn, pandas , and numpy stack with the addition of matplotlib, seaborn and networkx for graph visualization. A simple pip/conda install should work with each of these.

Let's go ahead and import the dataset directly from networkx and also set up the spring layout positioning for the graph visuals:

Let's go ahead a create a function to let us visualize the dataset:

In order to color the student nodes according to their club membership, we're using matplotlib's Normalize class to fit the number of clubs into the (0, 1) interval. You might be wondering why we need to do this when there's only two clubs that resulted from the study, but we'll find out later that some clustering algorithms didn't get it right and we need to be able to colorize more than two clubs.

To compare our algorithm's, performance we want the true labels, i.e. where each student ended up after the club fission. Unfortunately, the true labels are not provided in the networkx dataset, but we can retrieve them from the study itself and hardcode them here:

Now, let's use our draw function to visualize the true student club membership and their social connections:

This is the true division of the Karate Club. We'll see how our algorithms do when trying to cluster on their own below.

Before that, we need to preprocess the data by transforming the graph into a matrix since that is the format required for the clusterers. Remember this edge_mat variable below, we'll be using this for input to our clustering models in the next few sections:

Let's transform our graph into a matrix and print the result to see what it contains:

Clustering Algorithms

Unsupervised learning via clustering can work quite well in a lot of cases, but it can also perform terribly. We'll go through a few algorithms that are known to perform very well and see how the do on the same dataset. Fortunately, we can use the true cluster labels from the paper to calculate metrics and determine which is the best clustering option.

K-Means Clustering

K-means is considered by many to be the gold standard when it comes to clustering due to its simplicity and performance, so it's the first one we'll try out.

When you have no idea at all what algorithm to use, K-means is usually the first choice.

K-means works by defining spherical clusters that are separable in a way so that the mean value converges towards the cluster center. Because of this, K-Means may underperform sometimes.

To simply construct and train a K-means model, we can use sklearn's package. Before we do, we are going to define the number of clusters we know to be true (two), a list to hold results (labels), and a dictionary containing each algorithm we end up trying:

To fit this model, we could simply call algorithms['kmeans'].fit(edge_mat).

Since we'll be testing a bunch of clustering packages, we can loop over each and fit them at the same time. See below.

Agglomerative Clustering

The main idea behind Agglomerative clustering is that each node first starts in its own cluster, and then pairs of clusters recursively merge together in a way that minimally increases a given linkage distance.

The main advantage of Agglomerative clustering (and hierarchical clustering in general) is that you don’t need to specify the number of clusters. That of course, comes with a price: performance. But, in sklearn’s implementation, you can specify the number of clusters to assist the algorithm’s performance.

You can find a lot more information about how this technique works mathematically here.

Let's create and train an agglomerative model and add it to our dictionary:

Spectral Clustering

The Spectral clustering technique applies clustering to a projection of the normalized Laplacian. When it comes to image clustering, Spectral clustering works quite well.

Affinity Propagation

Affinity propagation is a bit different. Unlike the previous algorithms, this one does not require the number of clusters to be determined before running the algorithm.

Affinity propagation performs really well on several computer vision and biology problems, such as clustering pictures of human faces and identifying regulated transcripts, but we'll soon find out it doesn't work well for our dataset.

Let's add this final algorithm to our dictionary and wrap it all up by fitting each model:

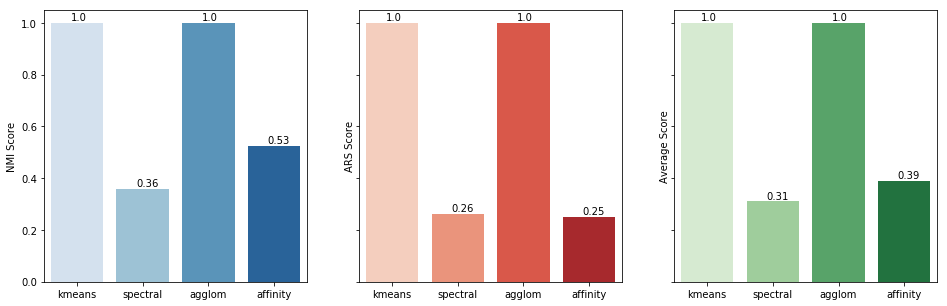

Metrics & Plotting

Well, it is time to choose which algorithm is more suitable for our data. A simple visualization of the result might work on small datasets, but imagine a graph with one thousand, or even ten thousand, nodes. That would be slightly chaotic for the human eye. So, let calculate the Adjusted Rand Score (ARS) and the Normalized Mutual Information (NMI) metrics for easier interpretation.

Normalized Mutual Information (NMI)

Mutual Information of two random variables is a measure of the mutual dependence between the two variables. Normalized Mutual Information is a normalization of the Mutual Information (MI) score to scale the results between 0 (no mutual information) and 1 (perfect correlation). In other words, 0 means dissimilar and 1 means

a perfect match.

Adjusted Rand Score (ARS)

Adjusted Rand Score on the other hand, computes a similarity measure between two clusters. ARS considers all pairs of samples and counts pairs that are assigned in the same or different clusters in the predicted and true clusters.

If that's a little weird to think about, have in mind that, for now, 0 is the lowest similarity and 1 is the highest.

In order to plot our scores, let's first calculate them. Remember that y_true is still a dictionary where the key is a student and the value is the club they ended up in. We need to get y_true's values first in order to compare them to y_pred:

Let's now plot both scores side-by-side along with their averages for a better comparison.

We're using Seaborn's barplot along with matplotlib just for simpler coloring syntax. Most of the following is pretty simple.

The only thing fancy we added was the text on top of the bars. This is achieved on each subplot by referencing each axes (subplot), then using the index (i) as the x-coordinate, the score (v) as the y-coordinate, followed by the rounded value of v as the actual text to show on top.

As you can see in the resulting chart, K-means and Agglomerative clustering have the best possible outcome for our dataset. That, of course, does not mean that Spectral and Agglomerative are low-performing algorithms, just that the did not fit in our particular dataset.

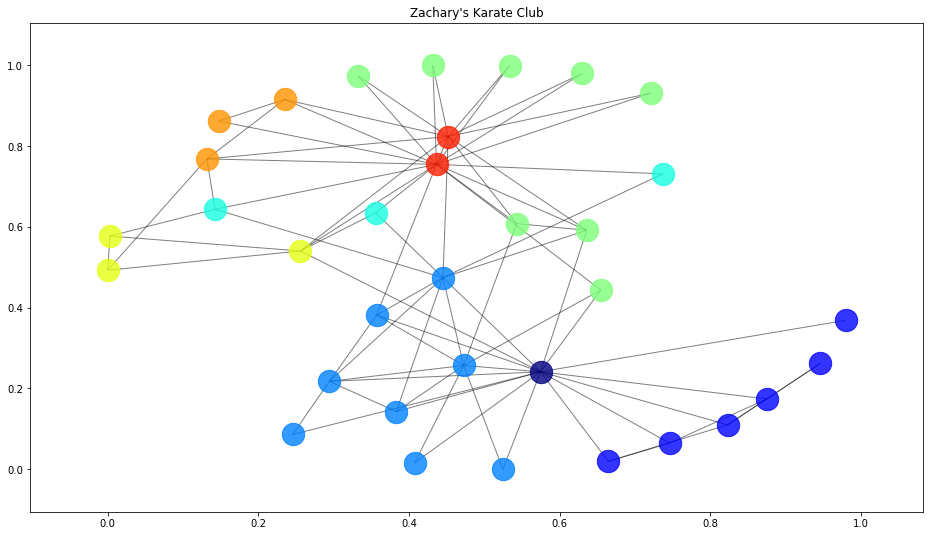

Out of curiosity, let's create a new function to plot where each algorithm went wrong by comparing the predicted student clusters with the true student clusters:

This graph depicts each algorithm's correct (green circle) and incorrect (black X) cluster assignments.

Interestingly, we see that although the Affinity algorithm had a higher average score, it only put one node in the correct cluster.

To see the reason why, we can look at the cluster information from that algorithm:

Since we were not able to define the number of clusters for Affinity Propagation, it determined that there were eight clusters. Our metrics didn't hint at this problem since our scoring wasn't based off of clustering accuracy.

Let's wrap it up but taking a look at what our Affinity algorithm actually clustered:

Final Words

Thanks for joining us in this clustering introduction. I hope you found some value in seeing how we can easily manipulate a public dataset and apply and compare several different clustering algorithms using sklearn in Python. If you're interested in getting a more in-depth, theoretical understanding of clustering, consider taking a machine learning course.

If you have any questions, definitely let us know in the comments below.

Continue learning

Machine Learning with Python — Coursera

Week four of this course has several great lectures about clustering to give you a more in-depth understanding.